full-stack-ai-agent-template's webhook service had a full read SSRF with response exfiltration

The webhook service in vstorm-co's full-stack-ai-agent-template accepted arbitrary URLs and stored HTTP responses in the database, creating a full read SSRF that could exfiltrate cloud metadata credentials. The fix adds DNS-aware URL validation at every code path.

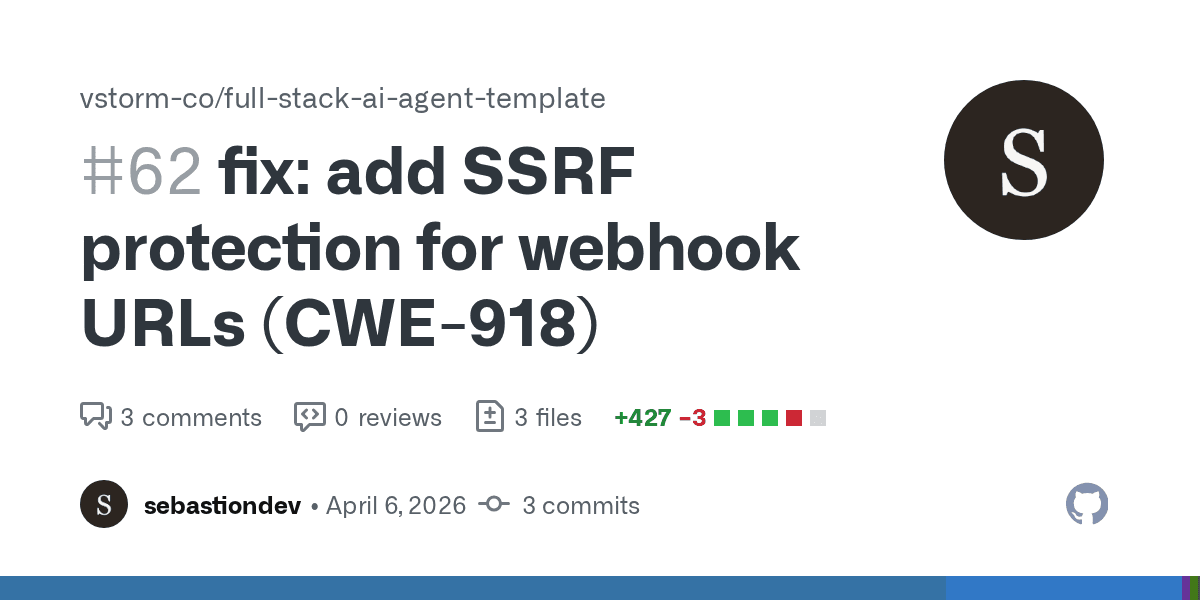

The webhook service in vstorm-co/full-stack-ai-agent-template accepted user-supplied URLs, made HTTP requests to them on behalf of the server and stored the response body in the database where the user could read it back. That is a textbook full read SSRF with response exfiltration, mapped to CWE-918. I identified the vulnerability, wrote a fix and submitted PR #62. It was merged on 9 April 2026.

What the template does

full-stack-ai-agent-template is a cookiecutter project scaffold for building AI agent applications. It generates a FastAPI backend with optional webhook support across three database backends: PostgreSQL (async), SQLite (sync) and MongoDB (async). When enable_webhooks is set to True during project generation, users can register webhook URLs via the REST API. When events fire, the service POSTs a JSON payload to each registered URL and records the delivery result.

The webhook feature is opt-in (disabled by default), but any project that enables it inherits the vulnerability.

The vulnerability

The data flow is straightforward. A user creates a webhook by POSTing a URL to the API. The URL passes through Pydantic's HttpUrl type, which validates that it is well-formed and uses an http or https scheme. No further validation occurs. The URL is stored in the database as-is.

When a webhook event fires, WebhookService._deliver() sends an HTTP POST to the stored URL using httpx.AsyncClient (or httpx.Client in the sync variant). The response body, up to 10KB, is written to a webhook_deliveries record. The user can then retrieve delivery results via GET /api/v1/webhooks/{id}/deliveries.

```
POST /api/v1/webhooks
  → create_webhook(data.url) → stored in DB
        ↓
Event triggers → _deliver() → httpx.post(webhook.url)
        ↓
response body stored in webhook_deliveries
        ↓
GET /api/v1/webhooks/{id}/deliveries → attacker reads response
```

An attacker with API access can register http://169.254.169.254/latest/meta-data/iam/security-credentials/ as a webhook URL. When the next event fires, the server sends its request to the AWS instance metadata endpoint, stores the response containing IAM credentials and hands them back through the deliveries API. The same technique works against any internal service the server can reach: Redis on 127.0.0.1:6379, an admin panel on 10.0.0.1:8080, the Kubernetes API server at kubernetes.default.svc.
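To make the gap concrete, here is a minimal sketch of a format-only check. It is a standard-library stand-in for what a well-formedness check like HttpUrl guarantees, not the template's code, and passes_scheme_only_check is a hypothetical name. Every internal destination listed above passes, because nothing inspects where the host points.

```python
from urllib.parse import urlsplit


def passes_scheme_only_check(url: str) -> bool:
    """Hypothetical stand-in: accept any well-formed http(s) URL with a host."""
    parts = urlsplit(url)
    return parts.scheme in {"http", "https"} and bool(parts.hostname)


internal_targets = [
    "http://169.254.169.254/latest/meta-data/iam/security-credentials/",
    "http://127.0.0.1:6379/",
    "http://10.0.0.1:8080/",
    "https://kubernetes.default.svc/",
]

for url in internal_targets:
    # The check only looks at URL shape, so each internal destination is accepted.
    print(url, passes_scheme_only_check(url))
```

A check of this kind still rejects file:///etc/passwd, which is why it can look sufficient at first glance.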

The critical detail is the response storage. Many SSRF vulnerabilities are blind: the attacker can trigger a request but cannot see the response. This one is not blind. The delivery record faithfully returns whatever the internal service responded with, turning the webhook system into a proxy for reading internal network resources.

Authentication narrows but does not close the window

With use_jwt=True (the default), webhook creation requires a valid admin JWT. This limits exploitation to compromised accounts or insiders. With use_jwt=False, there is no authentication at all: any anonymous request can register a webhook and read back delivery results. Both configurations are valid template outputs, and the SSRF exists in both.

What the fix does

The fix adds a new validate_webhook_url() function to app/core/sanitize.py and calls it at every point where a webhook URL enters or leaves the system.

URL validation

The validation function applies four checks in sequence:

Scheme validation. Only http and https are permitted. This blocks file://, gopher://, dict:// and other schemes that can be abused for SSRF.

Userinfo rejection. URLs containing credentials (http://user:pass@host/) are rejected outright. This prevents URL parsing ambiguities where the hostname extraction differs between the validator and the HTTP client, a known SSRF bypass technique.

IP literal blocking. If the hostname is an IP address, it is checked against Python's ipaddress module for is_private, is_reserved, is_loopback, is_link_local, is_multicast and is_unspecified. The fix also explicitly blocks the CGNAT range (100.64.0.0/10), because Python's ipaddress module does not classify that shared address space as private or reserved, and the range contains cloud metadata endpoints such as Alibaba Cloud's 100.100.100.200.

```python
import ipaddress

# Shared address space (CGNAT); checked explicitly because the ipaddress
# flags below do not cover it.
_CGNAT_NETWORK = ipaddress.ip_network("100.64.0.0/10")


def _is_ip_blocked(ip_str: str) -> bool:
    try:
        addr = ipaddress.ip_address(ip_str)
    except ValueError:
        return True  # Unparseable = blocked
    return (
        addr.is_private
        or addr.is_reserved
        or addr.is_loopback
        or addr.is_link_local
        or addr.is_multicast
        or addr.is_unspecified
        or addr in _CGNAT_NETWORK
    )
```

DNS resolution check. For hostnames that are not IP literals, the function resolves the hostname via socket.getaddrinfo() and validates that every returned address is public. This blocks DNS-based bypass techniques where a domain like localtest.me or 127.0.0.1.nip.io resolves to a loopback address. _is_ip_blocked() is run against every address in the DNS response; if any one of them is blocked, the URL is rejected.

A dedicated SSRFBlockedError exception type extends ValueError, avoiding fragile string matching when distinguishing SSRF blocks from other validation failures in upstream error handlers.
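The four checks can be sketched end to end as follows. This is an illustration under stated assumptions, not the merged code: the resolve parameter and the _system_resolver helper are hypothetical additions so the example runs without network access, and raising plain ValueError for scheme, userinfo and resolution failures is my assumption about which failures count as "other validation failures".

```python
import ipaddress
import socket
from typing import Callable, Iterable
from urllib.parse import urlsplit

_CGNAT_NETWORK = ipaddress.ip_network("100.64.0.0/10")


class SSRFBlockedError(ValueError):
    """Raised when a URL points at a destination the server must not contact."""


def _is_ip_blocked(ip_str: str) -> bool:
    try:
        addr = ipaddress.ip_address(ip_str)
    except ValueError:
        return True  # unparseable = blocked
    return (
        addr.is_private or addr.is_reserved or addr.is_loopback
        or addr.is_link_local or addr.is_multicast or addr.is_unspecified
        or addr in _CGNAT_NETWORK
    )


def _system_resolver(host: str, port: int) -> Iterable[str]:
    # Hypothetical helper: sockaddr[0] is the address string for IPv4 and IPv6.
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    return {info[4][0] for info in infos}


def validate_webhook_url(
    url: str, resolve: Callable[[str, int], Iterable[str]] = _system_resolver
) -> str:
    parts = urlsplit(url)
    # 1. Scheme validation
    if parts.scheme not in ("http", "https"):
        raise ValueError(f"unsupported scheme: {parts.scheme!r}")
    # 2. Userinfo rejection
    if parts.username is not None or parts.password is not None:
        raise ValueError("credentials in URL are not allowed")
    host = parts.hostname
    if not host:
        raise ValueError("URL has no hostname")
    # 3. IP literal blocking
    try:
        ipaddress.ip_address(host)
        is_literal = True
    except ValueError:
        is_literal = False
    if is_literal:
        if _is_ip_blocked(host):
            raise SSRFBlockedError(f"blocked address: {host}")
        return url
    # 4. DNS resolution check: every resolved address must be public
    port = parts.port or (443 if parts.scheme == "https" else 80)
    try:
        addresses = list(resolve(host, port))
    except OSError as exc:
        raise ValueError(f"could not resolve {host!r}") from exc
    if not addresses or any(_is_ip_blocked(a) for a in addresses):
        raise SSRFBlockedError(f"{host!r} resolves to a blocked address")
    return url
```

Because SSRFBlockedError subclasses ValueError, a caller can catch it first for SSRF-specific handling and still fall through to a generic ValueError handler for everything else.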

Three validation points per backend

All three database variants (PostgreSQL, SQLite, MongoDB) are patched identically at three code paths:

| Code path | Purpose |
|---|---|
| create_webhook() | Block malicious URLs before they enter the database |
| update_webhook() | Validate replacement URLs on update |
| _deliver() | Re-validate at delivery time to defend against DNS rebinding |

The delivery-time check is the defence-in-depth layer. A DNS rebinding attack works by having a hostname resolve to a public IP at creation time (passing validation) and then switching the DNS record to an internal IP before the webhook fires. Re-resolving and re-checking at delivery time shrinks the window for this attack to the gap between the delivery-time validation and the httpx request itself, which performs its own DNS lookup. That TOCTOU gap still exists, but it is measured in milliseconds rather than hours or days.

A code comment also documents that httpx defaults to follow_redirects=False, and explicitly warns future developers not to enable it. An open redirect on a public endpoint would otherwise allow an attacker to bypass the URL check entirely by redirecting to an internal address after validation passes.
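The delivery-time behaviour can be sketched like this. The deliver function, its validate and send parameters and the result dictionary are hypothetical names chosen so the example runs without a network or a database. In the real service the send step would be the httpx call with redirects left disabled.

```python
from typing import Callable, Tuple

# Mirrors the 10KB cap on stored bodies; counting characters is a simplification.
MAX_STORED_BODY = 10 * 1024


def deliver(
    url: str,
    payload: dict,
    validate: Callable[[str], str],
    send: Callable[[str, dict], Tuple[int, str]],
) -> dict:
    """Re-validate the stored URL immediately before sending the request."""
    try:
        # Validation re-resolves DNS, so a record that was rebound to an
        # internal address after creation is caught here.
        validate(url)
    except ValueError as exc:
        return {"delivered": False, "error": str(exc), "response_body": None}

    # `send` must not follow redirects: a public URL that redirects to an
    # internal address would otherwise bypass the check above.
    status, body = send(url, payload)
    return {
        "delivered": True,
        "status": status,
        "response_body": body[:MAX_STORED_BODY],
    }
```

The point of the structure is that a failed validation records an error and never reaches the network call, so nothing from an internal service can end up in the delivery record.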

The review cycle

The maintainer, DEENUU1, reviewed the initial PR and identified five areas for improvement. Three of them were substantive:

ValueError propagation. The original implementation raised ValueError from the validation function, which would propagate as an HTTP 500 at the API layer. The fix was revised to wrap SSRF and validation errors in the project's existing ValidationError exception via a _validate_url_or_raise_422() helper, producing a clean 422 response.

Blocking I/O in async paths. socket.getaddrinfo() is a blocking call. In the async PostgreSQL and MongoDB code paths, it blocks the event loop. The maintainer flagged this as a performance concern under high webhook volume. A TODO comment was added acknowledging the issue and suggesting loop.getaddrinfo() or run_in_executor() as future improvements. This is a pragmatic compromise: the security fix ships now, the performance optimisation follows.
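One shape the follow-up could take, as a sketch rather than the project's code: asyncio's loop.getaddrinfo() is the asynchronous counterpart of socket.getaddrinfo(), so the lookup no longer stalls other coroutines. The resolve_addresses name is hypothetical.

```python
import asyncio
import socket


async def resolve_addresses(host: str, port: int) -> set[str]:
    """Resolve a hostname without blocking the event loop."""
    loop = asyncio.get_running_loop()
    # Asynchronous version of socket.getaddrinfo(); other tasks keep running
    # while the lookup is in flight.
    infos = await loop.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0]
    # is the address string.
    return {info[4][0] for info in infos}


if __name__ == "__main__":
    # An IP literal needs no DNS query, so this runs offline.
    print(asyncio.run(resolve_addresses("127.0.0.1", 80)))
```

Each returned address would then go through the same blocked-address check as in the synchronous path.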

Hardcoded port fallback. The original code defaulted to port 443 regardless of scheme. For http:// URLs, the correct default is 80. While getaddrinfo returns the same IP addresses regardless of port, the fix was updated to select the correct default based on scheme: 443 if parsed.scheme == "https" else 80.
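As a small illustration of the corrected fallback (effective_port is a hypothetical helper name, and honouring an explicit port in the URL is my assumption about the surrounding code):

```python
from urllib.parse import urlsplit


def effective_port(url: str) -> int:
    """Return the explicit port if present, else the scheme's default."""
    parsed = urlsplit(url)
    if parsed.port is not None:
        return parsed.port
    return 443 if parsed.scheme == "https" else 80
```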

The review also prompted the addition of a comprehensive test suite in tests/test_ssrf.py covering blocked IPs, blocked schemes, allowed URLs, DNS resolution mocking and edge cases like URLs with credentials.
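To show what DNS resolution mocking looks like in such a suite, here is a sketch using unittest.mock. It is not taken from tests/test_ssrf.py: hostname_is_blocked is a deliberately simplified stand-in for the real validator, and fake_getaddrinfo is a hypothetical helper.

```python
import ipaddress
import socket
from unittest import mock


def hostname_is_blocked(host: str) -> bool:
    """Simplified stand-in: blocked if any resolved address is internal."""
    infos = socket.getaddrinfo(host, 443, type=socket.SOCK_STREAM)
    for info in infos:
        addr = ipaddress.ip_address(info[4][0])
        if addr.is_private or addr.is_loopback or addr.is_link_local:
            return True
    return False


def fake_getaddrinfo(addresses):
    """Build a replacement for socket.getaddrinfo that returns fixed addresses."""
    def _fake(host, port, *args, **kwargs):
        return [
            (socket.AF_INET, socket.SOCK_STREAM, 6, "", (address, port))
            for address in addresses
        ]
    return _fake


def test_hostname_resolving_to_loopback_is_blocked():
    with mock.patch("socket.getaddrinfo", fake_getaddrinfo(["127.0.0.1"])):
        assert hostname_is_blocked("127.0.0.1.nip.io")


def test_public_hostname_is_allowed():
    with mock.patch("socket.getaddrinfo", fake_getaddrinfo(["93.184.216.34"])):
        assert not hostname_is_blocked("example.com")
```

Patching the resolver keeps the tests deterministic and offline, and makes it easy to simulate a hostname that returns both a public and an internal address.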

Where this sits in the pattern

This is the latest in a recurring vulnerability class I have been finding across AI agent infrastructure in 2026. The pattern has a consistent shape: a framework accepts user input or LLM output, transforms it into a privileged operation (a database query, an HTTP request, a file system path) and does so with minimal validation because the developer assumed the input would be well-behaved.

In LightRAG's Memgraph backend, it was entity types interpolated into Cypher queries. In Hugging Face's skills framework, it was DuckDB queries built from string formatting. In nousresearch/hermes-agent, it was file paths with no traversal protection. Here, it is webhook URLs with no destination validation.

The common thread is not that these are difficult bugs. SSRF prevention is well-documented. The OWASP SSRF Prevention Cheat Sheet covers exactly this scenario. The common thread is that AI agent frameworks are being built at speed by developers focused on functionality, and the security properties that mature webhook implementations take for granted (IP allowlisting, DNS rebinding protection, response body isolation) simply are not on the checklist.

A cookiecutter template amplifies this. Every project generated from the template inherits whatever security posture the template had at generation time. There is no upgrade mechanism, no patch propagation. If a user generated a project before this fix, their webhook service has the SSRF, and they will have it until they manually port the changes. Template-based code generation distributes vulnerabilities at the same speed it distributes features.

The response body storage is what elevates this from a standard SSRF to something worth writing about. Most webhook implementations fire and forget, or at most log a status code. This one stored the response content and served it back through the API. That design decision, reasonable from a debugging perspective, turned an already dangerous vulnerability class into a reliable data exfiltration channel. The distance between "useful webhook diagnostics" and "cloud credential theft" was one missing validation function.
