Anthropic shipped its entire source code to npm and the internet kept it forever

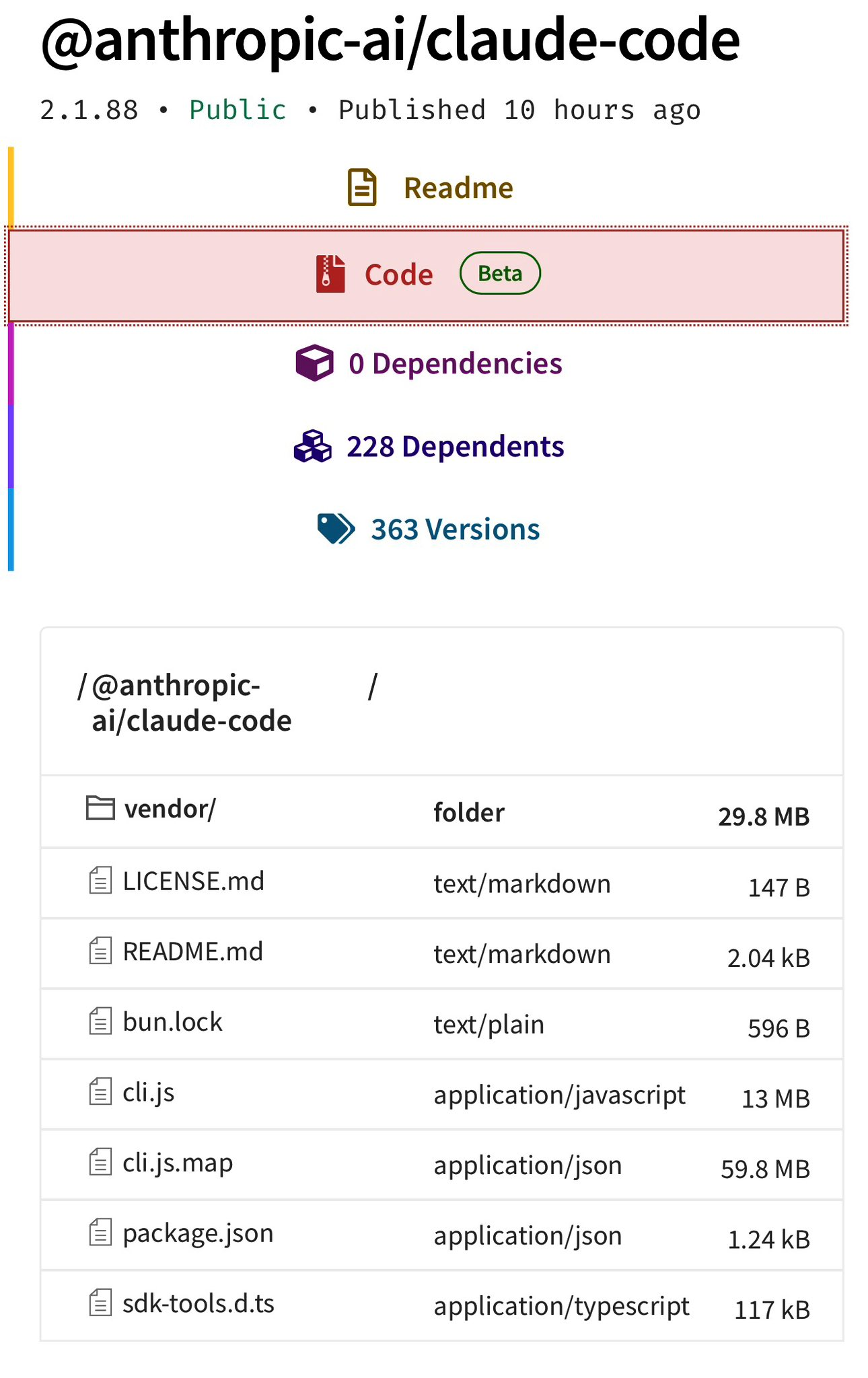

A 59.8 MB source map in Claude Code v2.1.88 exposed 512,000 lines of Anthropic's proprietary TypeScript to anyone who pulled the package from the public npm registry. Clean-room rewrites and decentralised mirrors made DMCA takedowns futile.

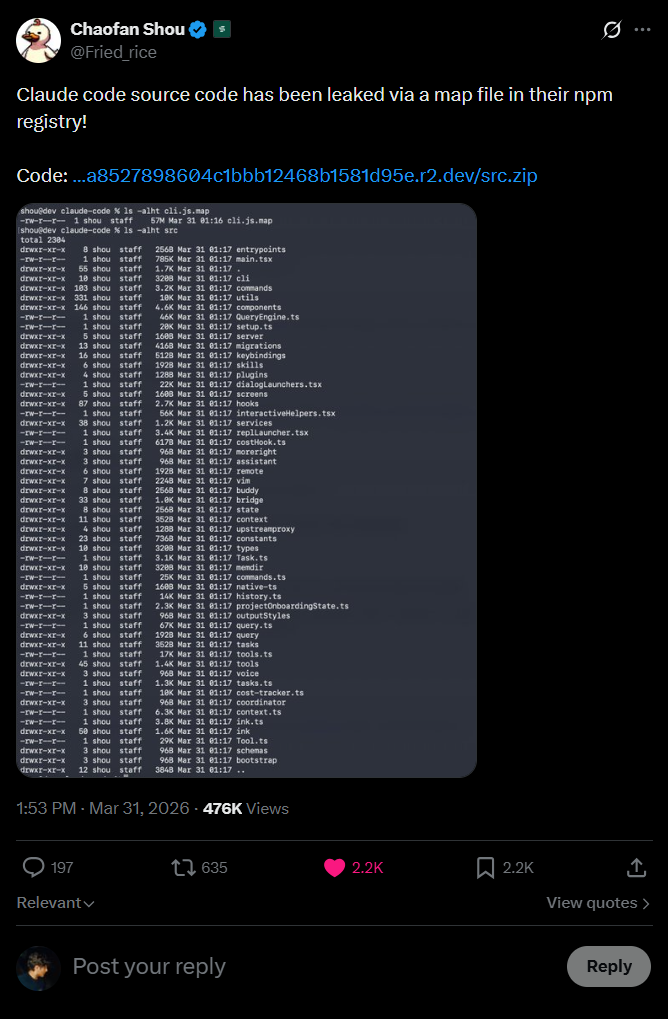

Anthropic pushed Claude Code v2.1.88 to the npm registry on the morning of 31 March 2026 with a 59.8 MB JavaScript source map bundled inside. That single file, cli.js.map, contained enough information to reconstruct approximately 512,000 lines of TypeScript across 1,900 files: the full internal architecture of what is arguably the most commercially successful AI coding agent on the market. By 04:23 ET, researcher Chaofan Shou had posted a download link on X.

Chaofan Shou's original post on X announcing the leak. Screenshot via Kuberwastaken/claude-code, which may be subject to DMCA takedown.

By sunrise, the entire codebase was mirrored across GitHub, archived on decentralised platforms and being rewritten in Python from scratch.

This was not a breach. It was a packaging mistake. Bun's bundler generates source maps by default unless explicitly told not to. Someone forgot.

It was also the third time in six days that Anthropic had inadvertently exposed proprietary information to the public internet.

How a debug file became a strategic leak

Source maps exist to help developers debug minified JavaScript. They map compressed production code back to the original source. They have no business shipping inside a public package of closed-source software. The fix is a single line in .npmignore or a bundler flag. Anthropic missed both.
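
A cheap guard against this class of mistake is to inspect what `npm pack` would publish before anything reaches the registry. The Python sketch below assumes npm 7 or later, whose `npm pack --dry-run --json` prints a JSON array describing each tarball, including a `files` list of packaged paths. The function names are hypothetical and not part of any Anthropic tooling.

```python
import json
import subprocess
import sys


def find_source_maps(pack_report: list) -> list:
    """Return every packaged path ending in .map from `npm pack --json` output."""
    hits = []
    for package in pack_report:
        for entry in package.get("files", []):
            path = entry.get("path", "")
            if path.endswith(".map"):
                hits.append(path)
    return hits


def main() -> int:
    """Run `npm pack --dry-run --json` in the current package and check its file list."""
    # --dry-run builds the file list without writing a tarball to disk.
    result = subprocess.run(
        ["npm", "pack", "--dry-run", "--json"],
        capture_output=True, text=True, check=True,
    )
    leaked = find_source_maps(json.loads(result.stdout))
    for path in leaked:
        print(f"refusing to publish: {path}", file=sys.stderr)
    return 1 if leaked else 0
```

One way to wire this in is a small script that exits with `main()`'s return code, run as the package's `prepublishOnly` script, so a non-zero exit aborts `npm publish`. An explicit `files` allowlist in package.json is a complementary control: apart from a few files npm always includes, anything not listed stays out of the tarball.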

The mistake is common enough that it barely warrants a headline by itself. What makes this incident significant is the scale of what was inside the map file. Claude Code is not a thin wrapper around an API. The leaked source reveals a multi-layered orchestration system: memory management, multi-agent coordination, OAuth flows, permission logic, feature flags and internal model routing.
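
Reconstructing whole files from a map is possible because the Source Map v3 format has an optional `sourcesContent` array that can embed the full original text of each entry in `sources`. The reporting does not spell out which fields the leaked cli.js.map used, so treat this as an illustration: a minimal Python sketch, with hypothetical function names, that measures how much original source a map carries before it ships.

```python
import json
from pathlib import Path
from typing import Tuple


def embedded_source_stats(source_map: dict) -> Tuple[int, int]:
    """Return (files, lines) of original source embedded in a v3 source map."""
    files = lines = 0
    for text in source_map.get("sourcesContent") or []:
        if text is None:  # the spec allows null for entries without embedded text
            continue
        files += 1
        lines += len(text.splitlines())
    return files, lines


def audit_map_file(path: str) -> Tuple[int, int]:
    """Load a .map file from disk and report how much source it would expose."""
    source_map = json.loads(Path(path).read_text(encoding="utf-8"))
    return embedded_source_stats(source_map)
```

A non-zero file count on a production artifact means the map would expose source text, not just line mappings. Even with `sourcesContent` stripped, the `sources` list still reveals the project's file paths.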

The extracted file tree from the source map. Screenshot via Kuberwastaken/claude-code, which may be subject to DMCA takedown.

VentureBeat, which obtained and reviewed the source, described it as "a complex, multi-threaded operating system for software engineering."

Anthropic confirmed the leak in a statement: "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again."

They said the same thing last time. According to NDTV, citing Odaily, an early version of Claude Code was exposed via the same mechanism in February 2025. Anthropic removed the old version from npm and deleted the source map. Fourteen months later, the identical mistake shipped again.

What the source code revealed

The architectural details that leaked are not trivial. They represent proprietary engineering that, according to VentureBeat, underpins a product with an estimated $2.5 billion in annualised recurring revenue.

Memory architecture

Claude Code uses a three-layer memory system designed to solve "context entropy", the tendency for long-running agent sessions to become confused as complexity grows. At its core is MEMORY.md, a lightweight pointer index (~150 characters per line) that sits permanently in context. It does not store data. It stores locations. Actual knowledge lives in topic files fetched on demand. Raw transcripts are never read back into context wholesale; they are searched via grep for specific identifiers. A "Strict Write Discipline" rule ensures the index only updates after a successful file write, preventing the model from polluting its own context with failed attempts.

The agent is also instructed to treat its own memory as a "hint" rather than ground truth, requiring verification against the actual codebase before acting on recalled information.
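
The leak coverage describes how this memory system behaves, not its code. As a rough illustration only, here is a Python sketch of three ideas from the paragraphs above: a pointer index that stores locations rather than knowledge, a write path that touches the index only after the topic file is safely on disk, and transcript search by identifier instead of wholesale reloading. The class, the file layout (`topics/*.md`, `*.log` transcripts) and the method names are all assumptions.

```python
from pathlib import Path
from typing import Dict, List, Optional


class PointerIndex:
    """Hypothetical MEMORY.md-style index: one short pointer line per topic."""

    MAX_LINE = 150  # echoes the ~150-characters-per-line figure in the reports

    def __init__(self, root: Path) -> None:
        self.root = root
        self.index_path = root / "MEMORY.md"
        self.entries: Dict[str, str] = {}

    def _topic_path(self, topic: str) -> Path:
        return self.root / "topics" / f"{topic}.md"

    def write_topic(self, topic: str, body: str) -> bool:
        """Write knowledge to a topic file; touch the index only on success."""
        path = self._topic_path(topic)
        try:
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_text(body, encoding="utf-8")
        except OSError:
            return False  # a failed write never reaches the index
        # The index stores a location, never the body itself.
        pointer = f"- {topic} -> {path.relative_to(self.root).as_posix()}"
        self.entries[topic] = pointer[: self.MAX_LINE]
        self.index_path.write_text("\n".join(self.entries.values()) + "\n", encoding="utf-8")
        return True

    def recall(self, topic: str) -> Optional[str]:
        """Load a topic on demand. Callers should treat it as a hint and re-check the code."""
        path = self._topic_path(topic)
        return path.read_text(encoding="utf-8") if path.is_file() else None


def grep_transcripts(transcript_dir: Path, identifier: str) -> List[str]:
    """Return only the transcript lines that mention `identifier`, not whole files."""
    hits: List[str] = []
    for log in sorted(transcript_dir.glob("*.log")):
        for line in log.read_text(encoding="utf-8").splitlines():
            if identifier in line:
                hits.append(f"{log.name}: {line}")
    return hits
```

The ordering inside `write_topic` is the point of the sketch: because the index is rewritten only after `write_text` returns, a failed attempt leaves no pointer behind for the agent to trust later.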

KAIROS: the always-on daemon

References to "KAIROS" (from the ancient Greek for "the opportune moment") appear over 150 times in the source. It represents an autonomous daemon mode where Claude Code operates as an always-on background agent rather than a reactive tool. The system includes autoDream, a memory consolidation process that runs while the user is idle: merging observations, resolving contradictions and converting vague insights into structured facts. A forked subagent handles these maintenance tasks to prevent the main agent's reasoning from being corrupted by housekeeping.
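
Nothing in the reporting says how autoDream decides between conflicting notes, so the Python sketch below assumes the simplest policy, newest observation wins, and an arbitrary 10-minute idle threshold. It models only the merge and contradiction-detection step plus an idle gate. The forked subagent and the conversion of vague insights into structured facts are out of scope, and every name here is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Observation:
    key: str        # what the note is about, e.g. "test_command"
    value: str      # what was observed
    seen_at: float  # unix timestamp of the observation


def consolidate(observations: List[Observation]) -> Tuple[Dict[str, str], List[str]]:
    """Merge duplicates into one fact per key; the newest value wins on conflict.

    Returns the consolidated facts and the keys whose observations disagreed.
    """
    latest: Dict[str, Observation] = {}
    seen_values: Dict[str, Set[str]] = {}
    for obs in observations:
        seen_values.setdefault(obs.key, set()).add(obs.value)
        current = latest.get(obs.key)
        if current is None or obs.seen_at >= current.seen_at:
            latest[obs.key] = obs
    facts = {key: obs.value for key, obs in latest.items()}
    contradictions = sorted(key for key, vals in seen_values.items() if len(vals) > 1)
    return facts, contradictions


def should_dream(last_user_activity: float, now: float, idle_seconds: float = 600.0) -> bool:
    """Gate housekeeping so it runs only after the user has been idle for a while."""
    return now - last_user_activity >= idle_seconds
```

Returning the contradicting keys separately, rather than silently overwriting them, leaves room for a later step to flag them for verification instead of trusting the newest note blindly.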

Undercover Mode

Buried in the source is a system specifically designed to prevent Anthropic's internal information from appearing in public repositories. The system prompt discovered in the leak explicitly warns the model: "You are operating UNDERCOVER... Your commit messages... MUST NOT contain ANY Anthropic-internal information. Do not blow your cover."

The subsystem scrubs model names, internal codenames and AI attribution from any public-facing git activity. Anthropic presumably uses this for internal dogfooding against open-source projects. The irony of a leak-prevention system being discovered via a leak has not been lost on anyone.
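
The leaked prompt suggests the real subsystem relies at least partly on instructing the model. A deterministic post-filter with the same goal could look like this hypothetical Python commit-message scrubber. The deny-list reuses codenames named in the leak coverage, and the attribution patterns (a `Co-Authored-By:` trailer or a "Generated with ... Claude" line) are assumed examples rather than anything confirmed from the source.

```python
import re

# Hypothetical deny-lists. The codenames come from the leak coverage; the
# attribution patterns are assumed examples of AI credit lines in commits.
CODENAMES = ("Capybara", "Fennec", "Numbat", "KAIROS")
CODENAME_RE = re.compile(r"\b(?:" + "|".join(CODENAMES) + r")\b", re.IGNORECASE)
ATTRIBUTION_RE = re.compile(
    r"^(?:co-authored-by:.*\bclaude\b.*|.*\bgenerated with\b.*\bclaude\b.*)$",
    re.IGNORECASE,
)


def scrub_commit_message(message: str) -> str:
    """Drop AI-attribution lines, then redact internal codenames."""
    kept = [line for line in message.splitlines() if not ATTRIBUTION_RE.match(line.strip())]
    return CODENAME_RE.sub("[redacted]", "\n".join(kept).rstrip())


def leaks_internal_info(message: str) -> bool:
    """True if the message still contains a codename or an attribution line."""
    if CODENAME_RE.search(message):
        return True
    return any(ATTRIBUTION_RE.match(line.strip()) for line in message.splitlines())
```

A filter like this only catches what is on its list; a prompt-level instruction can cover paraphrases a regex never anticipated, which may be why the leaked system relies on the model itself.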

Internal model metrics

The source confirms internal codenames: Capybara maps to a Claude 4.6 variant, Fennec to Opus 4.6, and Numbat to an unreleased model still in testing. Internal comments reveal Anthropic is iterating on Capybara v8, which has a 29-30% false claims rate, a significant regression from the 16.7% rate in v4. An "assertiveness counterweight" was added to prevent the model from becoming too aggressive in code refactors.

For competitors, these metrics offer a rare benchmark for the current ceiling of agentic performance and show exactly which failure modes Anthropic is still trying to solve.
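
Using only the two figures reported for Capybara, the size of that regression works out as follows (pp = percentage points):

```latex
\begin{aligned}
\Delta_{\text{abs}} &= 29\% - 16.7\% = 12.3\ \text{pp} \quad\text{to}\quad 30\% - 16.7\% = 13.3\ \text{pp} \\
\text{ratio} &= \tfrac{29}{16.7} \approx 1.74 \quad\text{to}\quad \tfrac{30}{16.7} \approx 1.80
\end{aligned}
```

In other words, v8 makes false claims roughly 74 to 80 percent more often than v4 did.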

Unreleased features

The code contained 44 hidden feature flags covering functionality that has not been announced. Among them: Buddy, a Tamagotchi-style AI companion with 18 species, rarity tiers and stats including debugging, patience, chaos and wisdom. A teaser rollout was apparently planned for April 1-7.

DMCA versus the internet

Anthropic's legal response was swift. DMCA takedown notices hit GitHub mirrors within hours. But the response demonstrated, once again, why takedowns fail against distributed infrastructure.

Sigrid Jin, a developer previously featured in the Wall Street Journal for consuming 25 billion Claude Code tokens, sat down at 4 AM and rewrote the core architecture in Python using an AI orchestration tool. The resulting repository, claw-code, hit 30,000 GitHub stars faster than any repository in recorded history. It is a clean-room rewrite: a new creative work that translates architectural concepts without copying source code verbatim. Gergely Orosz, founder of The Pragmatic Engineer newsletter, noted on X that a clean-room rewrite "violates no copyright and cannot be taken down."

Meanwhile, the original TypeScript source was mirrored to Gitlawb, a decentralised git platform, with the message: "Will never be taken down." A separate repository compiled all of Claude's internal system prompts.

The copyright question gets murkier still. If significant portions of Claude Code were written by Claude itself, as Anthropic's own CEO has implied, then the strength of any copyright claim over that code is uncertain. In March 2025 the DC Circuit held that copyright protection requires human authorship, leaving purely AI-generated works unprotected, and the Supreme Court declined to hear the challenge.

The same day, axios was compromised

The source leak would be notable on its own. What elevates it is the context.

Hours before the Claude Code source map went public, the axios npm package, the most popular JavaScript HTTP client with over 100 million weekly downloads, was hit by a supply chain attack. Two malicious versions (1.14.1 and 0.30.4) were published between 00:21 and 01:00 UTC on 31 March via a compromised maintainer account. The packages injected a hidden dependency, plain-crypto-js@4.2.1, whose sole purpose was a postinstall script that deployed a cross-platform remote access trojan.
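
The delivery mechanism was an install-time lifecycle script in a transitive dependency, which npm runs automatically unless scripts are disabled. With lockfileVersion 2 or 3, npm marks such packages with `"hasInstallScript": true` in the lockfile's `packages` map, so a short audit can list every dependency that will execute code on install. A minimal Python sketch; the function names are hypothetical:

```python
import json
from pathlib import Path
from typing import List, Tuple


def packages_with_install_scripts(lock: dict) -> List[Tuple[str, str]]:
    """List (name, version) for every locked package that declares an install script.

    Reads the lockfileVersion 2/3 "packages" map, where npm records
    "hasInstallScript": true for packages with install-time lifecycle scripts.
    """
    found = []
    for key, meta in lock.get("packages", {}).items():
        if not key or not meta.get("hasInstallScript"):
            continue  # the "" key is the root project itself
        # "node_modules/a/node_modules/@scope/b" -> "@scope/b"
        name = key.rsplit("node_modules/", 1)[-1]
        found.append((name, meta.get("version", "?")))
    return sorted(found)


def audit_lockfile(path: str) -> List[Tuple[str, str]]:
    """Load package-lock.json from disk and list its install-script packages."""
    return packages_with_install_scripts(json.loads(Path(path).read_text(encoding="utf-8")))
```

A new name appearing in this list between two lockfile revisions, as plain-crypto-js would have, deserves review before merge. `npm ci --ignore-scripts` skips lifecycle scripts entirely, at the cost of breaking packages that genuinely need a build step.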

According to Snyk's advisory (SNYK-JS-AXIOS-15850650), the dropper contacted a command-and-control server at sfrclak[.]com:8000 and downloaded platform-specific payloads: an AppleScript-based RAT for macOS that spoofed Apple daemon naming conventions, a VBScript downloader for Windows that masqueraded as Windows Terminal, and a Python RAT for Linux launched via nohup. The malware self-deleted after execution, replacing its own package.json with a clean version to evade forensic detection.

StepSecurity, which detected the compromise, described it as "among the most operationally sophisticated supply chain attacks ever documented against a top-10 npm package." The attack was pre-staged across 18 hours. Three payloads were pre-built for three operating systems. Both release branches were poisoned within 39 minutes. Every artifact was designed to self-destruct.

The malicious versions were live for approximately three hours before npm removed them. Anyone whose CI/CD pipeline or development environment pulled a fresh install during that window should assume full system compromise and rotate all secrets.

The convergence matters because VentureBeat explicitly flagged that anyone who installed Claude Code via npm on 31 March between 00:21 and 03:29 UTC could have pulled the compromised axios version as a transitive dependency. The Claude Code source leak and the axios supply chain attack are unrelated incidents, but they collided on the same day, on the same registry, compounding the risk for the same developers.

Three exposures in six days

The Claude Code source leak is not an isolated event. Five days earlier, on 26 March, Fortune reported that Anthropic had left approximately 3,000 files in an unsecured, publicly searchable data store. Among them was a draft blog post describing Claude Mythos, a new model tier above Opus whose model carries the codename Capybara, and which Anthropic's own documentation described as posing "unprecedented cybersecurity risks." Roy Paz, a senior AI security researcher at LayerX, independently located and reviewed the exposed documents.

Three data exposures in under a week. An unsecured data lake. A source map that shipped twice in fourteen months. These are not sophisticated attacks against Anthropic's infrastructure. They are configuration errors, packaging mistakes and missing ignore rules. The kind of operational hygiene that Anthropic's own AI coding tool is designed to catch in other people's code.

What defenders should do now

The immediate actions are straightforward. If you installed or updated any npm packages on 31 March between 00:21 and 03:29 UTC, check your lockfiles for axios@1.14.1, axios@0.30.4 or plain-crypto-js. If found, treat the machine as compromised. Rotate every credential that was accessible from that environment.
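
The lockfile check can be scripted. This Python sketch walks an npm package-lock.json in either the flat `packages` layout (lockfileVersion 2 and 3) or the nested `dependencies` tree (lockfileVersion 1) and reports the indicators named above. It is a starting point under stated assumptions, not an official detector: it does not read yarn.lock or pnpm-lock.yaml, and a clean lockfile says nothing about global installs or one-off `npx` runs on the same machine during the window. The function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Dict, List, Optional, Set

# Indicators named in the axios advisories: two axios versions and the
# injected dependency (any version of it is treated as suspicious).
BAD_VERSIONS: Dict[str, Optional[Set[str]]] = {
    "axios": {"1.14.1", "0.30.4"},
    "plain-crypto-js": None,  # None = every version
}


def _is_bad(name: str, version: str) -> bool:
    if name not in BAD_VERSIONS:
        return False
    versions = BAD_VERSIONS[name]
    return versions is None or version in versions


def find_compromised(lock: dict) -> List[str]:
    """Return sorted "name@version" hits from an npm package-lock.json (v1, v2 or v3)."""
    hits = set()
    # lockfileVersion 2/3: flat "packages" map keyed by install path.
    for key, meta in lock.get("packages", {}).items():
        name = key.rsplit("node_modules/", 1)[-1]
        if key and _is_bad(name, meta.get("version", "")):
            hits.add(f"{name}@{meta.get('version')}")
    # lockfileVersion 1 (and the legacy section of v2): nested "dependencies" tree.
    stack = [lock.get("dependencies", {})]
    while stack:
        deps = stack.pop()
        for name, meta in deps.items():
            if _is_bad(name, meta.get("version", "")):
                hits.add(f"{name}@{meta.get('version')}")
            stack.append(meta.get("dependencies", {}))
    return sorted(hits)


def scan(path: str) -> List[str]:
    """Load a lockfile from disk and return any indicator hits."""
    return find_compromised(json.loads(Path(path).read_text(encoding="utf-8")))
```

Any non-empty result means the advice above applies in full: treat the machine as compromised and rotate every credential it could reach.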

For Claude Code specifically, Anthropic recommends migrating to the native installer (curl -fsSL https://claude.ai/install.sh | bash) rather than npm. The native binary does not depend on the npm dependency chain and supports automatic updates.

More broadly, pin your dependencies. Use lockfiles. Audit transitive dependencies. None of this is new advice, but when a single day produces both a source code exposure and a supply chain RAT on the world's most popular HTTP client library, the advice bears repeating.
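
As a concrete example of why ranges matter: a caret range such as `^1.14.0` accepts any 1.x release from 1.14.0 upward, including the malicious 1.14.1, whenever dependencies are resolved fresh, for example without a lockfile or after `npm update`. The Python sketch below flags direct dependencies in package.json that are not pinned to an exact version. The function name and the exact-version regex are assumptions, and pinning direct dependencies does not pin transitive ones; that is the job of the lockfile and `npm ci`.

```python
import re
from typing import Dict, List

# Exact "major.minor.patch" with optional prerelease/build metadata. Anything
# else (^1.2.3, ~1.2.3, >=1, *, latest, 1.x, git URLs) is resolved at install time.
EXACT = re.compile(r"^\d+\.\d+\.\d+(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?$")

# peerDependencies are left out on purpose: ranges are the norm there.
SECTIONS = ("dependencies", "devDependencies", "optionalDependencies")


def unpinned(manifest: dict) -> List[str]:
    """List "section: name@spec" for every dependency not pinned to an exact version."""
    loose = []
    for section in SECTIONS:
        deps: Dict[str, str] = manifest.get(section, {})
        for name, spec in sorted(deps.items()):
            if not EXACT.match(spec.strip()):
                loose.append(f"{section}: {name}@{spec}")
    return loose
```

Running `npm config set save-exact true` makes `npm install <pkg>` record exact versions by default, so new dependencies start out pinned.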

Operational security is not a feature flag

We examined the methodology behind Claude Code Security when it launched in February, asking whether the same AI that writes code should also audit it. The source leak adds a different dimension to that question.

The technical revelations from the Claude Code source are genuinely interesting. The memory architecture is clever. The daemon mode is ambitious. The internal metrics are refreshingly honest about the limitations of current models. Under different circumstances, publishing these details voluntarily would have burnished Anthropic's reputation for transparency.

But these details leaked because someone did not add *.map to a configuration file. The same mistake that leaked the same product fourteen months ago. The company that builds tools to find bugs in other people's code keeps shipping its own source to a public registry by accident.

Anthropic positions itself as the safety-conscious AI company, the one that publishes responsible scaling policies, runs red teams and talks openly about existential risk. That positioning gets harder to maintain when the operational security failures are this basic and this repetitive. You cannot build trust in AI systems on a foundation of forgotten .npmignore entries.
